
(slightly skeptical) Educational society promoting "Back to basics" movement against IT overcomplexity and bastardization of classic Unix


Curl is a command line tool whose main value is that it permits scripting HTTP requests, especially emulating a browser to submit forms. It can also be used to retrieve pages, competing in this area with wget. It can be used from Perl through a libcurl wrapper. See WWW::Curl - Perl extension interface for libcurl (also at libcurl - the Perl Binding).

Use curl --help or curl --manual to get basic information about it.

Curl makes the requests, it gets the data, it sends data and it retrieves the information. You probably need to glue everything together using some kind of scripting language or repeated manual invocations.

Using curl's option -v will display what kind of commands curl sends to the server, as well as a few other informational texts. -v is the single most useful option when it comes to debugging or even understanding the curl-server interaction.

The simplest and most common request/operation made using HTTP is to get a URL. The URL could itself refer to a web page, an image or a file. The client issues a GET request to the server and receives the document it asked for. If you issue the command line

curl http://curl.haxx.se

you get a web page returned in your terminal window: the entire HTML document that the URL holds.

All HTTP replies contain a set of headers that are normally hidden; use curl's -i option to display them as well as the rest of the document. You can also ask the remote server for ONLY the headers by using the -I option (which will make curl issue a HEAD request).

Although yum itself may use http_proxy in either upper-case or lower-case, curl requires the name of the variable to be in lower-case.

Curl automatically tries to read the .curlrc file (or _curlrc file on win32 systems) from the user's home dir on startup.

SUSE automatically generates this file for the root account based on the proxy settings specified. The config file can be made up of normal command line switches. You can also specify the long options without the dashes to make it more readable.

You can separate the options and the parameter with spaces, or with = or :

Comments can be used within the file. If the first letter on a line is a '#'-letter the rest of the line is treated as a comment.

If you want the parameter to contain spaces, you must enclose the entire parameter within double quotes ("). Within those quotes, you specify a quote as \".

NOTE: You must specify options and the option's arguments on the same line.

Example, set default time out and proxy in a config file:

#We want a 30 minute timeout:

-m 1800

# ... and we use a proxy for all accesses:

proxy = proxy.our.domain.com:8080

White spaces ARE significant at the end of lines, but all white spaces leading up to the first characters of each line are ignored.
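The syntax rules above can be read as a small line-oriented grammar. The following is only a rough sketch of that reading in Python, not curl's actual parser: the helper names (`parse_curl_config`, `_unquote`) are hypothetical, and edge cases such as a parameter that itself begins with `=` or `:` are deliberately ignored.

```python
import re

# Hypothetical helper: option name, then spaces, '=' or ':' as separator,
# then the parameter. This mirrors the rules described in the text only.
_LINE = re.compile(r'^([^\s=:]+)[\s=:]*(.*)$')


def _unquote(text):
    """Strip the surrounding double quotes; a backslash makes the next character literal."""
    out = []
    i = 1  # skip the opening quote
    while i < len(text):
        ch = text[i]
        if ch == "\\" and i + 1 < len(text):
            out.append(text[i + 1])
            i += 2
            continue
        if ch == '"':
            break  # closing quote
        out.append(ch)
        i += 1
    return "".join(out)


def parse_curl_config(text):
    """Return a list of (option, parameter) pairs from config-style text."""
    pairs = []
    for raw in text.splitlines():
        line = raw.lstrip()  # leading white space is ignored
        if not line or line.startswith("#"):
            continue  # blank line or comment
        match = _LINE.match(line)
        if match is None:
            continue  # not an option line under this simplified reading
        option, parameter = match.groups()
        if parameter.startswith('"'):
            parameter = _unquote(parameter)
        pairs.append((option, parameter))
    return pairs


SAMPLE = '''\
# We want a 30 minute timeout:
-m 1800
# ... and we use a proxy for all accesses:
proxy = proxy.our.domain.com:8080
    url = "http://help.with.curl.com/curlhelp.html"
'''

for option, parameter in parse_curl_config(SAMPLE):
    print(option, "->", parameter)
```

The option is kept exactly as written, so `-m` and the dash-less long form `proxy` both appear the way they do in the file.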

Prevent curl from reading the default file by using -q as the first command line parameter, like:

curl -q http://www.thatsite.com

Force curl to get and display a local help page in case it is invoked without URL by making a config file similar to:

# default url to get

url = "http://help.with.curl.com/curlhelp.html"

You can specify another config file to be read by using the -K/--config flag. If you set config file name to "-" it'll read the config from stdin, which can be handy if you want to hide options from being visible in process tables etc:

echo "user = user:passwd" | curl -K - http://that.secret.site.com

To get only the headers, use -I: curl then prints only the server response's HTTP headers instead of the page data.

curl -I ...

Also useful is -L: if the initial web server response indicates that the requested page has moved to a new location (a redirect), curl's default behavior is not to request the page at the new location but simply to print the redirect response it received. This switch instructs curl to make another request for the page at the new location whenever the web server returns a 3xx HTTP code.

Authentication is the ability to tell the server your username and password so that it can verify that you're allowed to do the request you're doing. The Basic authentication used in HTTP (which is the type curl uses by default) is *plain* *text* based, which means it sends username and password only slightly obfuscated, but still fully readable by anyone that sniffs on the network between you and the remote server.

To tell curl to use a user and password for authentication:

curl -u name:password www.secrets.com
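To see why Basic authentication is described as only slightly obfuscated, it helps to look at what ends up in the request header. The sketch below uses Python's standard base64 module; the function names are illustrative and the credentials are the placeholder ones from the command above.

```python
import base64


def basic_auth_header(user, password):
    """Build the Authorization header that Basic authentication sends."""
    token = base64.b64encode("{}:{}".format(user, password).encode("utf-8"))
    return "Authorization: Basic " + token.decode("ascii")


def decode_basic_auth(header):
    """Recover user:password from the header, as any eavesdropper could."""
    token = header.split("Basic ", 1)[1]
    return base64.b64decode(token).decode("utf-8")


header = basic_auth_header("name", "password")
print(header)                     # Authorization: Basic bmFtZTpwYXNzd29yZA==
print(decode_basic_auth(header))  # name:password
```

Base64 is an encoding, not encryption, so no key is needed to reverse it.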

The site might require a different authentication method (check the headers returned by the server), in which case --ntlm, --digest, --negotiate or even --anyauth might be the option that suits you.

Sometimes your HTTP access is only available through an HTTP proxy. This seems to be especially common at various companies. An HTTP proxy may require its own user and password to allow the client to get through to the Internet. To specify those with curl, run something like:

curl -U proxyuser:proxypassword curl.haxx.se

If your proxy requires the authentication to be done using the NTLM method, use --proxy-ntlm; if it requires Digest, use --proxy-digest.

If you use any one of these user+password options but leave out the password part, curl will prompt for the password interactively.

Do note that when a program is run, its parameters might be possible to see when listing the running processes of the system. Thus, other users may be able to watch your passwords if you pass them as plain command line options. There are ways to circumvent this.

Forms are the general way a web site can present an HTML page with fields for the user to enter data in, and then press some kind of 'OK' or 'submit' button to get that data sent to the server. The server then typically uses the posted data to decide how to act: for example, using the entered words to search a database, adding the info to a bug tracking system, displaying the entered address on a map, or using the info as a login prompt to verify that the user is allowed to see what they are about to see.

Of course there has to be some kind of program in the server end to receive the data you send. You cannot just invent something out of the air.

A GET-form uses the method GET, as specified in HTML like:

<form method="GET" action="junk.cgi">

<input type=text name="birthyear">

<input type=submit name=press value="OK">

</form>

In your favorite browser, this form will appear with a text box to fill in and a press-button labeled "OK". If you fill in '1905' and press the OK button, your browser will then create a new URL to get for you. The URL will get "junk.cgi?birthyear=1905&press=OK" appended to the path part of the previous URL.

If the original form was seen on the page "www.hotmail.com/when/birth.html", the second page you'll get will become "www.hotmail.com/when/junk.cgi?birthyear=1905&press=OK".

Most search engines work this way.

To make curl do the GET form post for you, just enter the expected created URL:

curl "www.hotmail.com/when/junk.cgi?birthyear=1905&press=OK"
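The URL a browser builds for a GET form can be reproduced mechanically: resolve the form's action against the page URL, then append the encoded fields after a '?'. A minimal sketch with Python's urllib.parse follows; the http:// scheme is an assumption, since the text gives the host without one.

```python
from urllib.parse import urlencode, urljoin

# Page that contains the form, and the form's relative action attribute.
page_url = "http://www.hotmail.com/when/birth.html"
action = "junk.cgi"

# Field names and values exactly as the browser would submit them.
fields = {"birthyear": "1905", "press": "OK"}

# The action replaces the last path segment of the page URL.
get_url = urljoin(page_url, action) + "?" + urlencode(fields)
print(get_url)  # http://www.hotmail.com/when/junk.cgi?birthyear=1905&press=OK
```

The resulting string is what would be passed, quoted, to curl on the command line.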

The GET method makes all input field names get displayed in the URL field of your browser. That's generally a good thing when you want to be able to bookmark that page with your given data, but it is an obvious disadvantage if you entered secret information in one of the fields or if there is a large number of fields creating a very long and unreadable URL.

The HTTP protocol then offers the POST method. This way the client sends the data separated from the URL and thus you won't see any of it in the URL address field.

The form would look very similar to the previous one:

<form method="POST" action="junk.cgi">

<input type=text name="birthyear">

<input type=submit name=press value=" OK ">

</form>

And to use curl to post this form with the same data filled in as before, we could do it like:

curl -d "birthyear=1905&press=%20OK%20" www.hotmail.com/when/junk.cgi

This kind of POST will use the Content-Type application/x-www-form-urlencoded and is the most widely used POST kind.

The data you send to the server MUST already be properly encoded; curl will not do that for you. For example, if you want the data to contain a space, you need to replace that space with %20, and so on. Failing to comply with this will most likely cause your data to be received wrongly and messed up.
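One way to prepare a correctly encoded string for -d is to let a library do the escaping. This sketch uses Python's urllib.parse and the form values from the example above; the variable names are illustrative. Note that `urlencode` encodes a space as '+' by default, which is also valid for form data, while passing `quote_via=quote` yields the %20 form used in the text.

```python
from urllib.parse import quote, urlencode

# The submit button's value really is " OK " with surrounding spaces.
fields = {"birthyear": "1905", "press": " OK "}

# Percent-encoding, matching the string shown in the curl -d example.
percent_encoded = urlencode(fields, quote_via=quote)

# Default behaviour: spaces become '+', the other common form encoding.
plus_encoded = urlencode(fields)

print(percent_encoded)  # birthyear=1905&press=%20OK%20
print(plus_encoded)     # birthyear=1905&press=+OK+
```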

Back in late 1995 an additional way to post data over HTTP was defined. It is documented in RFC 1867, which is why this method is sometimes referred to as RFC1867-posting.

This method is mainly designed to better support file uploads. A form that allows a user to upload a file could be written like this in HTML:

<form method="POST" enctype='multipart/form-data' action="upload.cgi">

<input type=file name=upload>

<input type=submit name=press value="OK">

</form>

This clearly shows that the Content-Type about to be sent is multipart/form-data.

To post to a form like this with curl, you enter a command line like:

curl -F upload=@localfilename -F press=OK [URL]
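To give a feel for what a multipart/form-data post looks like on the wire, here is a simplified sketch that assembles such a body by hand. The function name `build_multipart`, the fixed boundary and the file content are illustrative assumptions; curl picks its own boundary and handles details (such as escaping and per-file content types) that this sketch leaves out.

```python
import uuid


def build_multipart(fields, files, boundary=None):
    """Return (content_type, body) for a multipart/form-data POST.

    fields: dict of name -> text value
    files:  dict of name -> (filename, bytes content)
    """
    boundary = boundary or uuid.uuid4().hex
    delimiter = b"--" + boundary.encode("ascii")
    lines = []
    for name, value in fields.items():
        lines += [
            delimiter,
            'Content-Disposition: form-data; name="{}"'.format(name).encode(),
            b"",  # blank line separates part headers from part content
            value.encode("utf-8"),
        ]
    for name, (filename, content) in files.items():
        lines += [
            delimiter,
            'Content-Disposition: form-data; name="{}"; filename="{}"'.format(
                name, filename
            ).encode(),
            b"Content-Type: application/octet-stream",
            b"",
            content,
        ]
    lines.append(delimiter + b"--")  # closing delimiter
    lines.append(b"")
    return "multipart/form-data; boundary=" + boundary, b"\r\n".join(lines)


content_type, body = build_multipart(
    {"press": "OK"},
    {"upload": ("localfilename", b"hello")},
    boundary="XYZ",
)
print(content_type)
print(body.decode("ascii"))
```

Each form field becomes its own part, which is why this encoding suits file uploads better than one long urlencoded string.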

A very common way for HTML-based applications to pass state information between pages is to add hidden fields to the forms. Hidden fields are already filled in; they aren't displayed to the user and they get passed along just like all the other fields.

A similar example form with one visible field, one hidden field and one submit button could look like:

<form method="POST" action="foobar.cgi">

<input type=text name="birthyear">

<input type=hidden name="person" value="daniel">

<input type=submit name="press" value="OK">

</form>

To post this with curl, you won't have to think about if the fields are hidden or not. To curl they're all the same:

curl -d "birthyear=1905&press=OK&person=daniel" [URL]
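When a form has many hidden fields, collecting them by hand is error-prone. As a sketch, Python's html.parser can pull every input's name and value out of a saved page, after which only the visible fields need filling in. The class name `FormFieldCollector` is hypothetical, and the parser ignores select and textarea elements for brevity.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode


class FormFieldCollector(HTMLParser):
    """Collect name/value pairs from <input> tags, hidden or not."""

    def __init__(self):
        super().__init__()
        self.fields = {}

    def handle_starttag(self, tag, attrs):
        if tag != "input":
            return
        attributes = dict(attrs)
        name = attributes.get("name")
        # File inputs need a multipart post, so they are skipped here.
        if name and attributes.get("type") != "file":
            self.fields[name] = attributes.get("value") or ""


FORM = """<form method="POST" action="foobar.cgi">
<input type=text name="birthyear">
<input type=hidden name="person" value="daniel">
<input type=submit name="press" value="OK">
</form>"""

collector = FormFieldCollector()
collector.feed(FORM)
collector.fields["birthyear"] = "1905"  # fill in the visible field

post_data = urlencode(collector.fields)
print(post_data)  # birthyear=1905&person=daniel&press=OK
```

The field order differs from the command above, which normally does not matter to the receiving script.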

When you're about to fill in a form and send it to a server by using curl instead of a browser, you're of course very interested in sending a POST exactly the way your browser does.

An easy way to see this is to save the HTML page with the form on your local disk, modify the 'method' to a GET, and press the submit button (you could also change the action URL if you want to).

You will then clearly see the data get appended to the URL, separated with a '?'-letter as GET forms are supposed to.

Perhaps the best way to upload data to an HTTP server is to use PUT. Then again, this of course requires that someone put a program or script on the server end that knows how to receive an HTTP PUT stream.

Put a file to an HTTP server with curl:

curl -T uploadfile www.uploadhttp.com/receive.cgi

A HTTP request may include a 'referer' field (yes it is misspelled), which can be used to tell from which URL the client got to this particular resource. Some programs/scripts check the referer field of requests to verify that this wasn't arriving from an external site or an unknown page. While this is a stupid way to check something so easily forged, many scripts still do it. Using curl, you can put anything you want in the referer-field and thus more easily be able to fool the server into serving your request.

Use curl to set the referer field with:

curl -e http://curl.haxx.se daniel.haxx.se

Very similar to the referer field, all HTTP requests may set the User-Agent field. It names the user agent (client) that is being used. Many applications use this information to decide how to display pages. Silly web programmers try to make different pages for users of different browsers to make them look the best possible for their particular browsers. They usually also do different kinds of javascript, vbscript etc.

At times, you will see that getting a page with curl will not return the same page that you see when getting the page with your browser. Then you know it is time to set the User Agent field to fool the server into thinking you're one of those browsers.

To make curl look like Internet Explorer on a Windows 2000 box:

curl -A "Mozilla/4.0 (compatible; MSIE 5.01; Windows NT 5.0)" [URL]

Or why not look like you're using Netscape 4.73 on a Linux (PIII) box:

curl -A "Mozilla/4.73 [en] (X11; U; Linux 2.2.15 i686)" [URL]

When a resource is requested from a server, the reply from the server may include a hint about where the browser should go next to find this page, or a new page keeping newly generated output. The header that tells the browser to redirect is Location:.

Curl does not follow Location: headers by default, but will simply display such pages in the same manner it displays all HTTP replies. It does however feature an option that will make it attempt to follow the Location: pointers.

To tell curl to follow a Location:

curl -L www.sitethatredirects.com
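The logic behind following Location: headers is a short loop: fetch, check for a 3xx status with a Location header, resolve it against the current URL, and repeat up to a limit. The sketch below shows that loop in Python against a fake in-memory site; `follow_redirects`, `fake_fetch` and the `/new/` path are all hypothetical, and the limit of 50 mirrors curl's default maximum number of redirects.

```python
from urllib.parse import urljoin

REDIRECT_CODES = (301, 302, 303, 307, 308)


def follow_redirects(fetch, url, max_redirects=50):
    """Call fetch(url) -> (status, headers, body) until a non-redirect reply."""
    for _ in range(max_redirects + 1):
        status, headers, body = fetch(url)
        location = headers.get("Location")
        if status in REDIRECT_CODES and location:
            # Location may be relative, so resolve it against the current URL.
            url = urljoin(url, location)
            continue
        return url, status, body
    raise RuntimeError("too many redirects")


# A stand-in for a real server, so the sketch runs without network access.
FAKE_SITE = {
    "http://www.sitethatredirects.com/": (301, {"Location": "/new/"}, ""),
    "http://www.sitethatredirects.com/new/": (200, {}, "final page"),
}


def fake_fetch(url):
    return FAKE_SITE[url]


final_url, status, body = follow_redirects(
    fake_fetch, "http://www.sitethatredirects.com/"
)
print(final_url, status, body)
```

Without the loop, the caller would see only the first 301 reply, which is what curl shows when -L is omitted.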

If you use curl to POST to a site that immediately redirects you to another page, you can safely use -L and -d/-F together. Curl will only use POST in the first request, and then revert to GET in the following operations.

The way the web browsers do "client side state control" is by using cookies. Cookies are just names with associated contents. The cookies are sent to the client by the server. The server tells the client for what path and host name it wants the cookie sent back, and it also sends an expiration date and a few more properties.

When a client communicates with a server with a name and path as previously specified in a received cookie, the client sends back the cookies and their contents to the server, unless of course they are expired.

Many applications and servers use this method to connect a series of requests into a single logical session. To be able to use curl on such occasions, we must be able to record and send back cookies the way the web application expects them, the same way browsers deal with them.

The simplest way to send a few cookies to the server when getting a page with curl is to add them on the command line like:

curl -b "name=Daniel" www.cookiesite.com

Cookies are sent as common HTTP headers. This is practical as it allows curl to record cookies simply by recording headers. Record cookies with curl by using the -D option like:

curl -D headers_and_cookies www.cookiesite.com

(Take note that the -c option described below is a better way to store cookies.)

Curl has a full-blown cookie parsing engine built in that comes into use if you want to reconnect to a server and use cookies that were stored from a previous connection (or handcrafted manually to fool the server into believing you had a previous connection). To use previously stored cookies, you run curl like:

curl -b stored_cookies_in_file www.cookiesite.com

Curl's "cookie engine" gets enabled when you use the -b option. If you only want curl to understand received cookies, use -b with a file that doesn't exist. Example, if you want to let curl understand cookies from a page and follow a location (and thus possibly send back cookies it received), you can invoke it like:

curl -b nada -L www.cookiesite.com

Curl has the ability to read and write cookie files that use the same file format that Netscape and Mozilla do. It is a convenient way to share cookies between browsers and automatic scripts. The -b switch automatically detects if a given file is such a cookie file and parses it, and by using the -c/--cookie-jar option you'll make curl write a new cookie file at the end of an operation:

curl -b cookies.txt -c newcookies.txt www.cookiesite.com
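The Netscape/Mozilla cookie file format mentioned above is also understood by Python's http.cookiejar, which makes it a convenient bridge between curl and scripts. The following sketch writes one cookie to such a file and reads it back; the helper `make_cookie`, the temporary path and the expiry timestamp are illustrative assumptions.

```python
import os
import tempfile
from http.cookiejar import Cookie, MozillaCookieJar


def make_cookie(name, value, domain, path="/", expires=2147483647):
    """Build a simple non-secure cookie for the given host (hypothetical helper)."""
    return Cookie(
        version=0, name=name, value=value,
        port=None, port_specified=False,
        domain=domain, domain_specified=True,
        domain_initial_dot=domain.startswith("."),
        path=path, path_specified=True,
        secure=False, expires=expires, discard=False,
        comment=None, comment_url=None, rest={},
    )


# Write a cookie file in the Netscape format.
cookie_path = os.path.join(tempfile.mkdtemp(), "cookies.txt")
jar = MozillaCookieJar(cookie_path)
jar.set_cookie(make_cookie("name", "Daniel", "www.cookiesite.com"))
jar.save(ignore_discard=True, ignore_expires=True)

# Read it back, as a script would after a curl run that wrote the file.
reloaded = MozillaCookieJar(cookie_path)
reloaded.load(ignore_discard=True, ignore_expires=True)
loaded = {cookie.name: cookie.value for cookie in reloaded}
print(loaded)
```

A file produced this way should be usable as the argument to -b, and a file written by -c should load the same way, since both sides use the same tab-separated layout.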

There are a few ways to do secure HTTP transfers. By far the most common protocol for doing this is what is generally known as HTTPS, HTTP over SSL. SSL encrypts all the data that is sent and received over the network and thus makes it harder for attackers to spy on sensitive information.

SSL (or TLS as the latest version of the standard is called) offers a truckload of advanced features to allow all those encryptions and key infrastructure mechanisms encrypted HTTP requires.

Curl supports encrypted fetches thanks to the freely available OpenSSL libraries. To get a page from a HTTPS server, simply run curl like:

curl https://that.secure.server.com

In the HTTPS world, you use certificates to validate that you are the one you claim to be, in addition to normal passwords. Curl supports client-side certificates. All certificates are locked with a pass phrase, which you need to enter before the certificate can be used by curl. The pass phrase can be specified on the command line or, if not, entered interactively when curl queries for it. Use a certificate with curl on an HTTPS server like:

curl -E mycert.pem https://that.secure.server.com

curl also tries to verify that the server is who it claims to be, by verifying the server's certificate against a locally stored CA cert bundle. Failing the verification will cause curl to deny the connection. You must then use -k in case you want to tell curl to ignore that the server can't be verified.

More about server certificate verification and ca cert bundles can be read in the SSLCERTS document, available online here:

http://curl.haxx.se/docs/sslcerts.html

Doing fancy stuff, you may need to add or change elements of a single curl request.

For example, you can change the POST request to a PROPFIND and send the data as "Content-Type: text/xml" (instead of the default Content-Type) like this:

curl -d "<xml>" -H "Content-Type: text/xml" -X PROPFIND url.com

You can delete a default header by providing one without content. For example, you can ruin the request by chopping off the Host: header:

curl -H "Host:" http://mysite.com

You can add headers the same way. Your server may want a "Destination:" header, and you can add it:

curl -H "Destination: http://moo.com/nowhere" http://url.com
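For readers scripting in a language rather than the shell, the same three knobs (method, headers, body) exist in most HTTP libraries. As a rough analogue only, this sketch builds an equivalent request object with Python's urllib.request without sending anything; the variable name is illustrative, and the URLs are the placeholders from the curl examples.

```python
import urllib.request

# Roughly what -X PROPFIND, two -H options and -d "<xml>" control in curl.
request = urllib.request.Request(
    "http://url.com",
    data=b"<xml>",                       # like -d
    headers={
        "Content-Type": "text/xml",      # like -H "Content-Type: text/xml"
        "Destination": "http://moo.com/nowhere",
    },
    method="PROPFIND",                   # like -X PROPFIND
)

print(request.get_method())
# urllib stores header names capitalized as "Content-type".
print(request.get_header("Content-type"))
print(request.get_header("Destination"))
```

Nothing is transmitted until the request is passed to `urllib.request.urlopen`, so the object can be inspected safely first.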

Many times when you run curl on a site, you'll notice that the site doesn't seem to respond the same way to your curl requests as it does to your browser's.

Then you need to start making your curl requests more similar to your browser's requests:

A very good helper to make sure you do this right is the LiveHTTPHeader tool, which lets you view all headers you send and receive with Mozilla/Firefox (even when using HTTPS).

A more raw approach is to capture the HTTP traffic on the network with tools such as ethereal or tcpdump and check which headers were sent and received by the browser. (HTTPS makes this technique inefficient.)

October 6, 2007 | Linux by Examples

The title doesn't sound interesting if you have no idea what curl is. Why do we need curl to access an FTP server if we can access FTP with tools like ftp in the console, or gFTP?

Well, gFTP is a very handy FTP client with a GTK front end, and I use it daily to maintain files on my web servers. But sometimes we need a command that we can put into a script, and gFTP is not suitable for that. The default ftp command surprised me, too: it cannot do things inline. Say I want to download a file from an FTP server by passing the username and password in a single command line, so that I can put it into my script. I can't do this with the default ftp command!

Curl provides a way to access an FTP server to download and upload files and to list directories and files, and you can write your routine into a script using curl.

Let's look at how we can do it with curl.

The simplest way to access an FTP server with a username and password:

curl ftp://myftpsite.com --user myname:mypassword

With the command line above, curl will try to connect to the FTP server and list all the directories and files in the FTP home directory.

To download a file from the FTP server:

curl ftp://myftpsite.com/mp3/mozart_piano_sonata.zip --user myname:mypassword -o mozart_piano_sonata.zip

To upload a file to the FTP server:

curl -T koc_dance.mp3 ftp://myftpsite.com/mp3/ --user myname:mypassword

To list files in a subdirectory:

curl ftp://myftpsite.com/mp3/ --user myname:mypassword

To list only directories, silence the curl progress bar and use grep to filter:

curl ftp://myftpsite.com --user myname:mypassword -s | grep ^d

To remove files from the FTP server:

This is a bit tricky, because curl does not support it directly; however, you can use -X to pass in the real FTP command. (See the list of FTP service commands in RFC 959, section 4.1.3, FTP SERVICE COMMANDS.)

curl ftp://myftpsite.com/ -X 'DELE mp3/koc_dance.mp3' --user myname:mypassword

Caution: make sure you know what you are deleting! Curl will not prompt for an 'Are you sure?' confirmation.

Check out the curl manual for more:

man curl

or this (it contains different information):

curl --manual | less


Use the -L Flag to Follow Redirects

This is a good time to learn about the redirect option of the curl command:

curl -L bytexd.com

Notice how we didn't have to specify https:// like we did previously.

The -L flag or --location option follows the redirects. Use this to display the contents of any website you want. By default, the curl command with the -L flag will follow up to 50 redirects.

Downloading Multiple Files

You can download multiple files together using multiple -O flags, one for each URL.

"00:00

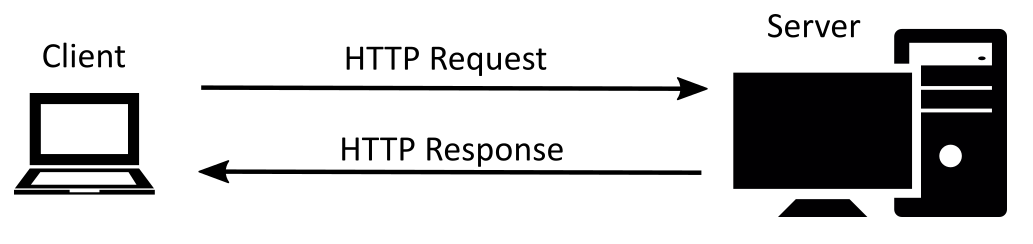

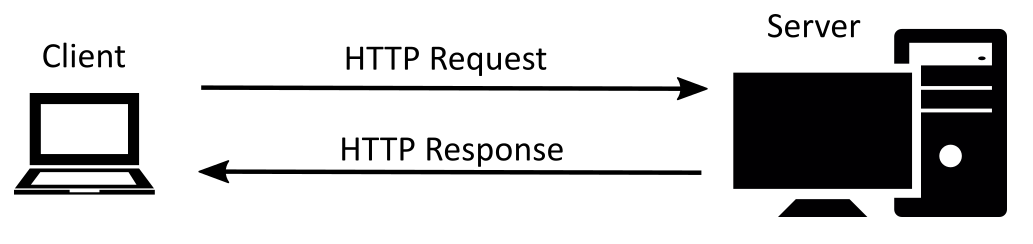

Resuming Downloads

If you cancel some downloads midway, you can resume them by using the -C - option.

Basics of HTTP Requests & Responses

We need to learn some basics of HTTP requests and responses before we can perform them with cURL efficiently.

Whenever your browser loads a page from any website, it performs HTTP requests. It is a client-server model.

Extract the HTTP Header with curl

The header is not shown when you perform GET requests with cURL. For example, this command will only output the message body without the HTTP header:

curl example.com

To see only the header, we use the -I flag or the --head option.

Debugging with the HTTP Headers

Now let's find out why you might want to look at the headers. We'll run the following command:

curl -I bytexd.com

Remember that we couldn't reach bytexd.com without the -L flag? If you didn't include the -I flag there would've been no output. With the -I flag you get the header of the response, which offers us some information: the code is 301, which indicates that a redirect is necessary. You can look up HTTP status codes and their meanings on Wikipedia, or on sites that pair each status code with a silly cat picture.

If you want to see the communication between cURL and the server, turn on the verbose option with the -v flag.
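Reading the status line and the Location header by eye works for one request; in a script it is handy to extract them. The sketch below parses a header block of the kind -I prints, using Python's http.HTTPStatus to name the code. The sample text is a hand-written illustration, not captured output, and `summarize_head` is a hypothetical helper.

```python
from http import HTTPStatus

# Illustrative header block only; a real server's reply will contain more lines.
SAMPLE_HEAD = (
    "HTTP/1.1 301 Moved Permanently\r\n"
    "Location: https://bytexd.com/\r\n"
    "\r\n"
)


def summarize_head(raw):
    """Return (status, redirect_target) from a raw response header block."""
    lines = raw.split("\r\n")
    # Status line: version, numeric code, optional reason phrase.
    _version, code, *_reason = lines[0].split(" ", 2)
    status = HTTPStatus(int(code))
    headers = {}
    for line in lines[1:]:
        if not line:
            break  # blank line ends the header block
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    redirect_to = headers.get("location") if 300 <= status < 400 else None
    return status, redirect_to


status, redirect_to = summarize_head(SAMPLE_HEAD)
print(int(status), status.phrase, "->", redirect_to)
```

A non-empty redirect target is the cue that the request should be repeated with -L.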

HTTP Header with the Redirect option

Now you might wonder what will happen if we use the redirect option -L with the header-only -I option. Curl then prints the headers of each response in the redirect chain, ending with those of the final page.

POST Requests

We already mentioned that cURL performs the GET request method by default. To use other request methods, use the -X or --request flag followed by the request method:

curl -X [ method ] [ more options ] [ URI ]

To use the POST method:

curl -X POST [ more options ] [ URI ]

Sending data using POST method

You can use the -d or --data option to specify the data you want to send to the server. This flag sends data with the content type application/x-www-form-urlencoded.

httpbin.org is a free HTTP request & response service, and httpbin.org/post accepts POST requests and will help us better understand how requests are made.

Uploading files with curl

Multipart data can be sent with the -F or --form flag, which uses the multipart/form-data content type. You can also send files using this flag; prefix the file name with @ to attach a whole file.

Modify the HTTP Header with curl

You can use the -H or --header flag to change the header content when sending data to a server. This allows us to send custom-made requests to the server. (May 23, 2021, bytexd.com)

"00:00

Use the -L Flag to Follow RedirectsThis is a good time to learn about the This is a good time to learn about the redirect option with the curl command : curl -L bytexd. com Notice how we didn't have to specify https:// like we did previously. curl -L bytexd. com Notice how we didn't have to specify https:// like we did previously. Notice how we didn't have to specify https:// like we did previously. https:// like we did previously. The -L flag or --location option follows the redirects. Use this to display the contents of any website you want. By default, the curl command with the -L flag The -L flag or --location option follows the redirects. Use this to display the contents of any website you want. By default, the curl command with the -L flag -L flag or --location option follows the redirects. Use this to display the contents of any website you want. By default, the curl command with the -L flag will follow up to 50 redirects .

"00:00

"00:00

"00:00

Downloading Multiple filesYou can download multiple files together using multiple -O flags. Here's an example where we download both of the files we used as examples previously: You can download multiple files together using multiple -O flags. Here's an example where we download both of the files we used as examples previously: -O flags. Here's an example where we download both of the files we used as examples previously:

"00:00

Resuming DownloadsIf you cancel some downloads midway, you can resume them by using the -C - option: If you cancel some downloads midway, you can resume them by using the -C - option: -C - option: Basics of HTTP Requests & Responses We need to learn some basics of the HTTP Requests & Responses before we can perform them with cURL efficiently. We need to learn some basics of the HTTP Requests & Responses before we can perform them with cURL efficiently. HTTP Requests & Responses before we can perform them with cURL efficiently.

Whenever your browser is loading a page from any website, it performs HTTP requests. It is a client-server model.

Whenever your browser is loading a page from any website, it performs HTTP requests. It is a client-server model. Extract the HTTP Header with curlThe header is not shown when you perform GET requests with cURL. For example, shis command will only output the message body without the HTTP header. curl example. com To see only the header, we use the -I flag or the --head option. The header is not shown when you perform GET requests with cURL. For example, shis command will only output the message body without the HTTP header. curl example. com To see only the header, we use the -I flag or the --head option. For example, shis command will only output the message body without the HTTP header. curl example. com To see only the header, we use the -I flag or the --head option. For example, shis command will only output the message body without the HTTP header. curl example. com To see only the header, we use the -I flag or the --head option. curl example. com To see only the header, we use the -I flag or the --head option. To see only the header, we use the -I flag or the --head option. -I flag or the --head option.

Debugging with the HTTP HeadersNow let's find out why you might want to look at the headers. We'll run the following command: curl -I bytexd. com Remember we Now let's find out why you might want to look at the headers. We'll run the following command: curl -I bytexd. com Remember we curl -I bytexd. com Remember we Remember we couldn't redirect to bytexd.com without the -L flag? If you didn't include the -I flag there would've been no outputs. -L flag? If you didn't include the -I flag there would've been no outputs. With the -I flag you'll get the header of the response, which offers us some information: With the -I flag you'll get the header of the response, which offers us some information: -I flag you'll get the header of the response, which offers us some information: The code is 301 which indicates a redirect is necessary. As we mentioned before you can check HTTP status codes and their meanings here ( Wikipedia ) or here ( status codes associated with silly cat pictures ) If you want to see the communication between cURL and the server then turn on the verbose option with the -v flag: If you want to see the communication between cURL and the server then turn on the verbose option with the -v flag: If you want to see the communication between cURL and the server then turn on the verbose option with the -v flag: -v flag:

HTTP Header with the Redirect option

Now you might wonder what will happen if we use the redirect option -L with the header-only -I option. Let's try it out:

curl -L -I bytexd.com

POST Requests

We already mentioned that cURL performs the GET request method by default. To use other request methods, use the -X or --request flag followed by the request method. The general syntax is:

curl -X [method] [more options] [URI]

For the POST method we'll use:

curl -X POST [more options] [URI]

Sending data using POST method

You can use the -d or --data option to specify the data you want to send to the server. This flag sends data with the content type of application/x-www-form-urlencoded.

httpbin.org is a free HTTP request & response service. Its httpbin.org/post endpoint accepts POST requests, which makes it a convenient target for trying out the -d flag and seeing how requests are made.

Uploading files with curl

Multipart data can be sent with the -F or --form flag, which uses the multipart/form-data content type. You can also send files using this flag: prefix the file name with @ to attach a whole file.

Modify the HTTP Header with curl

You can use the -H or --header flag to change the header content when sending data to a server. This will allow us to send custom-made requests to the server.

May 23, 2021 | bytexd.com

We can specify the content type using this header, for example application/json when sending a JSON object.

PUT Requests

The PUT request will update or replace the specified content. Perform the PUT request by using the -X flag. Here is an example with the PUT method sending raw JSON data:

curl -X PUT -H "Content-Type: application/json" -d '{"name":"bytexd"}' https://httpbin.org/put

DELETE Requests

You can request the server to delete some content with the DELETE request method. Here is the syntax for this request:

curl -X DELETE https://httpbin.org/delete

Let's see what actually happens when we send a request like this. We can look at the status code by extracting the header:

curl -I -X DELETE https://httpbin.org/delete
HTTP/2 200
date: Mon, 17 May 2021 21:40:03 GMT
content-type: application/json
content-length: 319
server: gunicorn/19.9.0
access-control-allow-origin: *
access-control-allow-credentials: true

Here we can see the status code is 200, which means the request was successful.
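When status checks like this end up in a script, it can help to turn the numeric code into a coarse category. The following is only a minimal sketch: the function name status_class is hypothetical (it is not part of curl), and the categories follow the usual HTTP grouping by first digit.

```shell
# status_class: hypothetical helper, not part of curl.
# Maps an HTTP status code to its class by looking at the first digit.
status_class() {
    case $1 in
        1[0-9][0-9]) echo "informational" ;;
        2[0-9][0-9]) echo "success" ;;
        3[0-9][0-9]) echo "redirection" ;;
        4[0-9][0-9]) echo "client error" ;;
        5[0-9][0-9]) echo "server error" ;;
        *)           echo "unknown" ;;
    esac
}

# Possible use (needs network access, so it is left as a comment):
#   code=$(curl -s -o /dev/null -w '%{http_code}' -X DELETE https://httpbin.org/delete)
#   echo "$code: $(status_class "$code")"
```

Anything that is not a three-digit code starting with 1 to 5 is reported as unknown, which also covers the 000 that curl prints when no HTTP response was received.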

Jul 01, 2020 | opensource.com

curl

curl transfers a URL. Use this command to test an application's endpoint or connectivity to an upstream service endpoint. curl can be useful for determining if your application can reach another service, such as a database, or checking if your service is healthy.

As an example, imagine your application throws an HTTP 500 error indicating it can't reach a MongoDB database:

$ curl -I -s myapplication:5000
HTTP/1.0 500 INTERNAL SERVER ERROR

The -I option shows the header information and the -s option silences the progress meter and error messages. Checking the endpoint of your database from your local desktop:

$ curl -I -s database:27017
HTTP/1.0 200 OK

So what could be the problem? Check if your application can get to other places besides the database from the application host:

$ curl -I -s https://opensource.com
HTTP/1.1 200 OK

That seems to be okay. Now try to reach the database from the application host. Your application is using the database's hostname, so try that first:

$ curl database:27017
curl: (6) Couldn't resolve host 'database'

This indicates that your application cannot resolve the database because the URL of the database is unavailable or the host (container or VM) does not have a nameserver it can use to resolve the hostname.
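The number in parentheses is curl's exit code, so a script can branch on it instead of parsing the message. A small sketch follows, assuming only a handful of well-known codes matter. The function name explain_curl_exit and the wording of the messages are made up for this illustration; the full list is in the EXIT CODES section of the curl manual page.

```shell
# explain_curl_exit: hypothetical helper that translates a few common
# curl exit codes into a short hint. Codes not listed fall through.
explain_curl_exit() {
    case $1 in
        0)  echo "transfer succeeded" ;;
        6)  echo "could not resolve host (check DNS / nameserver)" ;;
        7)  echo "failed to connect (host resolved, port unreachable or refused)" ;;
        28) echo "operation timed out" ;;
        52) echo "empty reply from server" ;;
        *)  echo "other curl error, see EXIT CODES in man curl" ;;
    esac
}

# Possible use on the application host (left as a comment, needs the network):
#   curl -s -o /dev/null --connect-timeout 5 database:27017
#   explain_curl_exit $?
```

In the scenario above the helper would report the DNS hint for exit code 6, which points at name resolution rather than at the database itself.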

Feb 25, 2017 | www.rosehosting.com

cURL is a very useful command line tool to transfer data from or to a server. cURL supports various protocols like FILE, HTTP, HTTPS, IMAP, IMAPS, LDAP, DICT, LDAPS, TELNET, FTP, FTPS, GOPHER, RTMP, RTSP, SCP, SFTP, POP3, POP3S, SMB, SMBS, SMTP, SMTPS, and TFTP.

cURL can be used in many different and interesting ways. With this tool you can download, upload and manage files, check your email address, or even update your status on some of the social media websites or check the weather outside. This article covers five of the most useful and basic uses of the cURL tool on any Linux VPS.

1. Check URL

One of the most common and simplest uses of cURL is typing the command itself, followed by the URL you want to check

curl https://domain.com

This command will display the content of the URL on your terminal.

2. Save the output of the URL to a file

The output of the cURL command can be easily saved to a file by adding the -o option to the command, as shown below

curl -o website https://domain.com
  % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                 Dload  Upload   Total   Spent    Left  Speed
100 41793    0 41793    0     0   275k      0 --:--:-- --:--:-- --:--:-- 2.9M

In this example, output will be saved to a file named 'website' in the current working directory.

3. Download files with cURL

You can download files with cURL by adding the -O option to the command. It is used for saving files on the local server with the same names as on the remote server

curl -O https://domain.com/file.zip

In this example, the 'file.zip' zip archive will be downloaded to the current working directory.

You can also download the file with a different name by adding the -o option to cURL.

curl -o archive.zip https://domain.com/file.zip

This way the 'file.zip' archive will be downloaded and saved as 'archive.zip'.

cURL can also be used to download multiple files in one command, as shown in the example below

curl -O https://domain.com/file.zip -O https://domain.com/file2.zip

cURL can also be used to download files securely via SSH using the following command

curl -u user sftp://server.domain.com/path/to/file

Note that you have to use the full path of the file you want to download.

4. Get HTTP header information from a website

You can easily get HTTP header information from any website you want by adding the -I option (capital 'i') to cURL.

curl -I http://domain.com
HTTP/1.1 200 OK
Date: Sun, 16 Oct 2016 23:37:15 GMT
Server: Apache/2.4.23 (Unix)
X-Powered-By: PHP/5.6.24
Connection: close
Content-Type: text/html; charset=UTF-8

5. Access an FTP server

To access your FTP server with cURL use the following command

curl ftp://ftp.domain.com --user username:password

cURL will connect to the FTP server and list all files and directories in the user's home directory.

You can download a file via FTP

curl ftp://ftp.domain.com/file.zip --user username:password

and upload a file to the FTP server

curl -T file.zip ftp://ftp.domain.com/ --user username:password

You can check the cURL manual page to see all available cURL options and functionalities

man curl


March 22, 2015 | Unixlore.net

Anyway, using curl with FTP over SSL is usually done something like this:

curl -3 -v --cacert /etc/ssl/certs/cert.pem \
    --ftp-ssl -T "/file/to/upload/file.txt" \
    ftp://user:[email protected]:port

Let's go over these options:

- -3: Force the use of SSL v3.

- -v: Gives verbose debugging output. Lines starting with '>' mean data sent by curl. Lines starting with '<' show data received by curl. Lines starting with '*' display additional information presented by curl.

- --cacert: Specifies which file contains the SSL certificate(s) used to verify the server. This file must be in PEM format.

- --ftp-ssl: Try to use SSL or TLS for the FTP connection. If the server does not support SSL/TLS, curl will fall back to unencrypted FTP.

- -T: Specifies a file to upload

The last part of the command line ftp://user:[email protected]:port is simply a way to specify the username, password, host and port all in one shot.
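Because -v marks every line with '>', '<' or '*', the trace is easy to filter. The sketch below keeps only what curl sent. The function name sent_lines is hypothetical, and the filter assumes the marker layout described in the list above.

```shell
# sent_lines: hypothetical filter. Reads curl -v trace text on stdin and
# prints only the lines curl sent (marked with '>'), with the marker removed.
sent_lines() {
    # Drop carriage returns first, then keep lines starting with '>'.
    tr -d '\r' | sed -n 's/^> \{0,1\}//p'
}

# Possible use (left as a comment, needs the network). curl writes the trace
# to stderr, so stderr goes into the pipe and the body is discarded:
#   curl -v http://curl.haxx.se 2>&1 >/dev/null | sent_lines
```

Swapping the '>' in the sed expression for '<' would give the received lines instead.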

October 6, 2007 | Linux by Examples | 34 Comments

The title doesn't sound interesting if you have no idea what curl is. Why do we need to use curl to access an ftp server, if we can access ftp with tools like ftp in the console or gFTP?

Well, gFTP is a very handy ftp client with a gtk front end, and I use it daily to maintain the files on my web servers. But sometimes we need a command that we can put into a script, and gFTP is not suitable for that. And the default ftp command surprised me: we cannot do things inline. Let's say I want to download a file from an ftp server by passing the username and password within one command line, so I can put it into my script. I can't do this with the default ftp command!

Curl provides a way to access an ftp server to download and upload files and to list directories and files, and you can write your routine into a script using curl.

Let's look at how we can do it with curl.

The simplest way to access an ftp server with username and password

curl ftp://myftpsite.com --user myname:mypassword

With the command line above, curl will try to connect to the ftp server and list all the directories and files in the ftp home directory.

To download a file from ftp server

curl ftp://myftpsite.com/mp3/mozart_piano_sonata.zip --user myname:mypassword -o mozart_piano_sonata.zip

To upload a file to ftp server

curl -T koc_dance.mp3 ftp://myftpsite.com/mp3/ --user myname:mypassword

To list files in sub directories.

curl ftp://myftpsite.com/mp3/ --user myname:mypassword

List only directories, silence the curl progress bar, and use grep to filter

curl ftp://myftpsite.com --user myname:mypassword -s | grep ^d

Remove files from ftp server.

This is a bit tricky, because curl does not support that by default. Anyway, you can make use of -X and pass in the REAL FTP command.

(Check out a list of FTP service commands in RFC 959, under 4.1.3. FTP SERVICE COMMANDS)

curl ftp://myftpsite.com/ -X 'DELE mp3/koc_dance.mp3' --user myname:mypassword

Caution: Make sure you know what you are deleting! It will not prompt for an 'Are you sure?' confirmation.

Check out the curl manual for more,

man curl

or this (contains different info):

curl --manual | less

September 8, 2011 | beerpla.net

Today, I was looking for a quick way to see HTTP response codes of a bunch of urls. Naturally, I turned to the curl command, which I would usually use like this:

curl -IL "URL"

This command would send a HEAD request (-I), follow through all redirects (-L), and display some useful information in the end. Most of the time it's ideal:

curl -IL "http://www.google.com"
HTTP/1.1 200 OK
Date: Fri, 11 Jun 2010 03:58:55 GMT
Expires: -1
Cache-Control: private, max-age=0
Content-Type: text/html; charset=ISO-8859-1
Server: gws
X-XSS-Protection: 1; mode=block
Transfer-Encoding: chunked

However, the server I was curling didn't support HEAD requests explicitly. Additionally, I was really only interested in HTTP status codes and not in the rest of the output. This means I would have to change my strategy and issue GET requests, ignoring HTML output completely.

Curl manual to the rescue. A few minutes later, I came up with the following, which served my needs perfectly:

curl -sL -w "%{http_code} %{url_effective}\\n" "URL" -o /dev/null

Here is a sample of what comes out:

curl -sL -w "%{http_code} %{url_effective}\\n" "http://www.amazon.com/Kindle-Wireless-Reading-Display-Generation/dp/B0015T963C?tag=androidpolice-20" -o /dev/null
200 http://www.amazon.com/Kindle-Wireless-Reading-Display-Generation/dp/B0015T963C

Here, -s silences curl's progress output, -L follows all redirects as before, -w prints the report using a custom format, and -o redirects curl's HTML output to /dev/null.

Here are the other special variables available in case you want to customize the output some more:

•url_effective

•http_code

•http_connect

•time_total

•time_namelookup

•time_connect

•time_pretransfer

•time_redirect

•time_starttransfer

•size_download

•size_upload

•size_header

•size_request

•speed_download

•speed_upload

•content_type

•num_connects

•num_redirects

•ftp_entry_path

Is there a better way to do this with curl? Perhaps, but this way offers the most flexibility, as I am in control of all the formatting.
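Since the report format is just a string of %{name} placeholders, it can be assembled from a list of the variable names above. This is a minimal sketch under that assumption. The function name write_out_format and the file urls.txt are hypothetical.

```shell
# write_out_format: hypothetical helper that turns a list of -w variable
# names into a format string such as '%{http_code} %{time_total}\n'.
write_out_format() {
    fmt=
    for var in "$@"; do
        # Separate placeholders with a single space.
        fmt="${fmt:+$fmt }%{$var}"
    done
    # The trailing \n stays literal here; curl expands it when printing.
    printf '%s\\n\n' "$fmt"
}

# Possible use (left as a comment, needs the network and a urls.txt file
# with one URL per line):
#   fmt=$(write_out_format http_code time_total url_effective)
#   while read -r url; do
#       curl -sL -o /dev/null -w "$fmt" "$url"
#   done < urls.txt
```

Keeping the format in one place makes it easy to add or drop a column for every URL in the list at once.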


nextthing.org

Want to examine the headers of a site for yourself? Try curl:

curl -i http://www.nextthing.org/

In the output of the above the first few lines are the headers, then there are a couple of line breaks, and then the body. If you just want to see the headers, and not the body, use the -I option instead of -i. Be forewarned, however, that some servers return different headers in this case, as curl will be requesting the data using a HEAD request rather than a GET request.

What I did to gather all of these headers was very similar. First, I downloaded an RDF dump of the Open Directory Project's directory, and pulled out every URL from that file. Then, I stuck all of the domain names of these URL's in a big database. A simple multithreaded Python script was used to download all of the index pages of these URL's using PycURL and stick the headers and page contents in a database. When that was done, I had a database with 2,686,155 page responses and 23,699,737 response headers. The actual downloading of all of this took about a week.

This is, of course, not anywhere near a comprehensive survey of the web. Netcraft received responses from 70,392,567 sites in its August 2005 web survey, so I hit around 3.8% of them. Not bad, but I'm sure there's a lot of interesting stuff I'm missing.

October 6, 2006

As you may have noticed, I've changed my web site's domain recently. Therefore, I had to redirect all requests to the new address. This has been done and it works as expected, but how about taking a closer look at the HTTP responses the web server returns to the client if an old URL is requested?

This is where CURL comes in handy once again. CURL's command line options include two very useful switches, -I and -L:

- -I : when used, CURL prints only the server response's HTTP headers, instead of the page data.

- -L : if the initial web server response indicates that the requested page has moved to a new location (redirect), CURL's default behaviour is not to request the page at that new location, but just print the HTTP error message. This switch instructs CURL to make another request asking for the page at the new location whenever the web server returns a 3xx HTTP code.

The combination of the above two switches results in having all the server responses' headers printed to the terminal, until CURL receives a code other than 3xx.

So, here is a real-life example. I have made two major changes to my web site so far; one included a URL structure modification when I moved from a pure HTML web site to WordPress, while the second was a domain change from the free domain raoul.shacknet.nu to the current paid domain name. So, this makes at least two permanent redirections. I say "at least", because other necessary redirections could take place as well, for example, redirections of the example.org version of the domain to the www.example.org version, or redirections required for permalinks.

So, here is CURL's output when I requested an old page of my web site:

$ curl -I -L http://raoul.shacknet.nu/servers/vnc.html
HTTP/1.1 301 Moved Permanently
Date: Fri, 06 Oct 2006 01:49:06 GMT
Server: Apache/2.2.2
Location: http://www.raoul.shacknet.nu/servers/vnc.html
Connection: close
Content-Type: text/html; charset=iso-8859-1

HTTP/1.1 301 Moved Permanently
Date: Fri, 06 Oct 2006 01:49:06 GMT
Server: Apache/2.2.2
Location: http://www.g-loaded.eu/servers/vnc.html
Connection: close
Content-Type: text/html; charset=iso-8859-1

HTTP/1.1 301 Moved Permanently
Date: Fri, 06 Oct 2006 01:49:06 GMT
Server: Apache/2.2.2
Location: http://www.g-loaded.eu/2005/11/10/configure-vnc-server-in-fedora/
Connection: close
Content-Type: text/html; charset=iso-8859-1

HTTP/1.1 200 OK
Date: Fri, 06 Oct 2006 01:49:06 GMT
Server: Apache/2.2.2
X-Powered-By: PHP/5.1.4
X-Pingback: http://www.g-loaded.eu/xmlrpc.php
Status: 200 OK
Connection: close
Content-Type: text/html; charset=UTF-8

The above output shows that the client has to send 4 HTTP requests and receive 4 respective responses from the server in order to reach that old page's final location:

- The first server response informs the client that the page has been permanently moved to the www version of the old domain.

- The second response indicates that the page has been permanently moved to the www version of the new domain using the old URL structure.

- The third indicates a permanent move to the new URL structure.

- The final response informs the client with a 200 OK code that it has reached the page's final location.
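For a quick overview of such a chain, the header dump can be reduced to one line per hop. The following is a rough sketch, assuming the input is the raw header text that curl -I -L prints. The function name redirect_chain is hypothetical.

```shell
# redirect_chain: hypothetical filter. Reads response headers on stdin
# (for example from `curl -s -I -L URL`) and prints one line per redirect
# hop, followed by the status code of the last response.
redirect_chain() {
    awk '
        { sub(/\r$/, "") }                        # headers end in CR LF
        /^HTTP\// { status = $2 }                 # remember the latest status code
        tolower($1) == "location:" { print status, "->", $2 }
        END { if (status != "") print status, "(final)" }
    '
}

# Possible use (left as a comment, needs the network):
#   curl -s -I -L http://raoul.shacknet.nu/servers/vnc.html | redirect_chain
```

For the output shown above this would print three "301 -> ..." lines, one per Location header, and then "200 (final)".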

In this example, CURL provides a clear view of what situation a browser, a search engine bot or any other HTTP client encounters when it tries to reach an old page.

I am not an expert, but I guess that too many redirects are not a good thing, not only by considering the small increase of the server load, but also that search engine bots might not like them very much. On the other hand, redirections are a necessary evil if you want links from other websites pointing to your old domain or your old URL structure to continue to be valid, which in fact adds "value" to your website, as search engine experts would say. So it's a matter of choice.

comp.unix.shell

I am not sure if this is the best newsgroup for my question, so feel free to redirect me.

I am trying to manipulate my ADSL modem via its web interface in a shell script. Basically, I need to disable and reactivate a certain setting, something called ZIPB mode. I am assigned a dynamic IP by my ISP, and when this changes, I need to reactivate ZIPB on the modem manually (an unfortunate limitation on the manufacturer's part).

The HTML form looks like this:

# <i>disabled.</i>
# <FORM method="post" ACTION="/configuration/zipb.html/enable">
# <INPUT type="hidden" name="EmWeb_ns:vim:4.ImZipbAgent:enabled" value="true">
# <INPUT type="hidden" name="EmWeb_ns:vim:3" value="/configuration/zipb.html">
# <INPUT type="submit" value="Enable">
# </FORM>

So, I try something like this in my shell script:

#!/usr/local/bin/bash
brackets () {
sed -e '
s/</</g
s/>/>/g
s,/,%2f,g
s/?/%3f/g
s/:/%3a/g
s/@/%40/g
s/&/%26/g
s/=/%3d/g
s/+/%2b/g
s/\$/%24/g
s/,/%2c/g
s/ /%20/g
'
}
input1=$(echo "EmWeb_ns:vim:4.ImZipbAgent:enabled"|brackets)"="$(echo "true"|brackets)
input2=$(echo "EmWeb_ns:vim:3"|brackets)"="$(echo "/configuration/zipb.html"|brackets)
input3="Enable"
curl -d '"'$input1"&"$input2"&"$input3'"' http://$user:$pass@copperjet/configuration/zipb.html/enable >2&1> /dev/null

but all I get is a rather unhelpful reply:

curl: (52) Empty reply from server

Can anyone suggest ways of debugging this and/or easier ways to go about this?

On a related note, I see that there is a book from No Starch called "Webbots, Spiders, and Screen Scrapers: A Guide to Developing Internet Agents with PHP/CURL" which looks like it covers these techniques, but

a) I am not too keen on buying a book on a topic which I don't plan to pursue further than this simple task, and

b) I am likewise not so keen on having to learn PHP just to enable me to do this.

Thanks for any ideas,

-- Colin
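One way to approach the encoding part of this question is to percent-encode names and values with a small function instead of a hand-written sed list. The sketch below is only an illustration, not the poster's method: the function name urlencode is hypothetical and it handles ASCII input only. Recent curl versions also have a --data-urlencode option that encodes the value part of a field for you.

```shell
# urlencode: hypothetical helper. Percent-encodes every character except
# the RFC 3986 unreserved set (letters, digits, '.', '_', '~', '-').
# Assumes ASCII input.
urlencode() {
    s=$1
    out=
    while [ -n "$s" ]; do
        c=${s%"${s#?}"}      # first character of the remaining string
        s=${s#?}             # drop that character
        case $c in
            [A-Za-z0-9._~-]) out="$out$c" ;;
            *) out="$out$(printf '%%%02X' "'$c")" ;;
        esac
    done
    printf '%s\n' "$out"
}

# Possible use with the form from the post (left as a comment, needs the modem).
# -v shows the exchange, which helps when the reply is empty:
#   body="$(urlencode 'EmWeb_ns:vim:4.ImZipbAgent:enabled')=$(urlencode true)"
#   body="$body&$(urlencode 'EmWeb_ns:vim:3')=$(urlencode /configuration/zipb.html)"
#   curl -v -u "$user:$pass" -d "$body" http://copperjet/configuration/zipb.html/enable
```

Passing the whole body as one double-quoted argument also avoids the extra literal quote characters that the original -d argument sends to the server.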


cURL - Wikipedia, the free encyclopedia

15 Practical Linux cURL Command Examples (cURL Download Examples)

cURL - Programs-Scripts Using Curl!

ftproxy.pl forward standard GET proxy requests to a user@host ftp proxy Björn Stenberg Curl::easy perl module for libcurl Georg Horn

cURL - Manual

cURL - Tutorial

WWWCurl - Perl extension interface for libcurl - search.cpan.org

http://curl.haxx.se/docs/httpscripting.html

The standard Perl WWW module, LWP, should be used in most cases to work with the HTTP or FTP protocol from Perl. However, there are some cases where LWP doesn't perform well. One is speed and the other is parallelism. WWW::Curl is much faster, uses far fewer CPU cycles and is capable of non-blocking parallel requests. In some cases, for example when building a web crawler, CPU usage and parallel downloads are important considerations. It can be desirable to use WWW::Curl to do the heavy lifting of a large number of downloads and wrap the resulting data into a Perl-friendly structure with HTTP::Response.

Getleft Tcl/Tk site grabber powered by Curl

PycURL A Python module interface to the cURL library.

HTTP extension for PHP Extended HTTP functionality for PHP.

curlpp A C++ wrapper for libcurl.

CurlFtpFS An FTP filesystem based on cURL and FUSE.

Curl HTTP Client - A simple but powerful curl-based HTTP client.

REXX/CURL - A Rexx external function package that provides an interface to the cURL package.

LATEST VERSION

You always find news about what's going on as well as the latest versions from the curl web pages, located at:

SIMPLE USAGE

Get the main page from netscape's web-server:

Get the root README file from funet's ftp-server:

curl ftp://ftp.funet.fi/README

Get a web page from a server using port 8000:

curl http://www.weirdserver.com:8000/

Get a list of the root directory of an FTP site:

curl ftp://cool.haxx.se/

Get a gopher document from funet's gopher server:

Get the definition of curl from a dictionary:

curl dict://dict.org/m:curl

Fetch two documents at once:

curl ftp://cool.haxx.se/ http://www.weirdserver.com:8000/

DOWNLOAD TO A FILE

Get a web page and store in a local file:

curl -o thatpage.html http://www.netscape.com/

Get a web page and store in a local file, make the local file get the name of the remote document (if no file name part is specified in the URL, this will fail):

curl -O http://www.netscape.com/index.html

Fetch two files and store them with their remote names:

curl -O www.haxx.se/index.html -O curl.haxx.se/download.html

USING PASSWORDS

FTP

To ftp files using name+passwd, include them in the URL like:

curl ftp://name:passwd@machine.domain:port/full/path/to/file

or specify them with the -u flag like

curl -u name:passwd ftp://machine.domain:port/full/path/to/file

HTTP

The HTTP URL doesn't support user and password in the URL string. Curl does support that anyway to provide a ftp-style interface and thus you can pick a file like:

curl http://name:passwd@machine.domain/full/path/to/file

or specify user and password separately like in

curl -u name:passwd http://machine.domain/full/path/to/file

NOTE! Since HTTP URLs don't support user and password, you can't use that style when using Curl via a proxy. You must use the -u style fetch during such circumstances.

HTTPS

Probably most commonly used with private certificates, as explained below.

GOPHER

Curl features no password support for gopher.

PROXY

Get an ftp file using a proxy named my-proxy that uses port 888:

curl -x my-proxy:888 ftp://ftp.leachsite.com/README

Get a file from a HTTP server that requires user and password, using the same proxy as above:

curl -u user:passwd -x my-proxy:888 http://www.get.this/

Some proxies require special authentication. Specify by using -U as above:

curl -U user:passwd -x my-proxy:888 http://www.get.this/

See also the environment variables Curl supports that offer further proxy control.

RANGES

With HTTP 1.1 byte-ranges were introduced. Using this, a client can request to get only one or more subparts of a specified document. Curl supports this with the -r flag.

Get the first 100 bytes of a document:

curl -r 0-99 http://www.get.this/

Get the last 500 bytes of a document:

curl -r -500 http://www.get.this/

Curl also supports simple ranges for FTP files as well. Then you can only specify start and stop position.

Get the first 100 bytes of a document using FTP:

curl -r 0-99 ftp://www.get.this/README
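Range requests also make it possible to fetch a document in pieces. The sketch below only computes the start-end pairs to pass to -r. The function name split_ranges is hypothetical, and the approach assumes the document size is already known and that the server honours ranges.

```shell
# split_ranges SIZE PARTS: hypothetical helper. Prints one "start-end"
# byte range per line, covering bytes 0..SIZE-1 in at most PARTS pieces.
split_ranges() {
    size=$1
    parts=$2
    chunk=$(( (size + parts - 1) / parts ))   # ceiling division
    start=0
    while [ "$start" -lt "$size" ]; do
        end=$(( start + chunk - 1 ))
        if [ "$end" -ge "$size" ]; then
            end=$(( size - 1 ))               # last piece may be shorter
        fi
        echo "$start-$end"
        start=$(( end + 1 ))
    done
}

# Possible use (left as a comment, needs the network):
#   n=0
#   for range in $(split_ranges 1000 4); do
#       curl -s -r "$range" -o "part.$n" http://www.get.this/
#       n=$(( n + 1 ))
#   done
#   cat part.0 part.1 part.2 part.3 > document
```

The ranges are inclusive on both ends, matching the "0-99 is the first 100 bytes" convention used above.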

UPLOADING

FTP

Upload all data on stdin to a specified ftp site:

curl -T - ftp://ftp.upload.com/myfile

Upload data from a specified file, login with user and password:

curl -T uploadfile -u user:passwd ftp://ftp.upload.com/myfile

Upload a local file to the remote site, and use the local file name remote too:

curl -T uploadfile -u user:passwd ftp://ftp.upload.com/

Upload a local file to get appended to the remote file using ftp:

curl -T localfile -a ftp://ftp.upload.com/remotefile

Curl also supports ftp upload through a proxy, but only if the proxy is configured to allow that kind of tunneling. If it does, you can run curl in a fashion similar to:

curl --proxytunnel -x proxy:port -T localfile ftp.upload.com

HTTP

Upload all data on stdin to a specified http site:

curl -T - http://www.upload.com/myfile

Note that the http server must've been configured to accept PUT before this can be done successfully.

For other ways to do http data upload, see the POST section below.

VERBOSE / DEBUG

If curl fails where it isn't supposed to, if the servers don't let you in, if you can't understand the responses: use the -v flag to get verbose fetching. Curl will output lots of info and what it sends and receives in order to let the user see all client-server interaction (but it won't show you the actual data).

curl -v ftp://ftp.upload.com/

To get even more details and information on what curl does, try using the --trace or --trace-ascii options with a given file name to log to, like this:

curl --trace trace.txt www.haxx.se

DETAILED INFORMATION

Different protocols provide different ways of getting detailed information about specific files/documents. To get curl to show detailed information about a single file, you should use -I/--head option. It displays all available info on a single file for HTTP and FTP. The HTTP information is a lot more extensive.

For HTTP, you can get the header information (the same as -I would show) shown before the data by using -i/--include. Curl understands the -D/--dump-header option when getting files from both FTP and HTTP, and it will then store the headers in the specified file.

Store the HTTP headers in a separate file (headers.txt in the example):

curl --dump-header headers.txt curl.haxx.se/

Note that headers stored in a separate file can be very useful at a later time if you want curl to use cookies sent by the server. More about that in the cookies section.

POST (HTTP)

It's easy to post data using curl. This is done using the -d <data> option. The post data must be urlencoded.

Post a simple "name" and "phone" guestbook.

curl -d "name=Rafael%20Sagula&phone=3320780" http://www.where.com/guest.cgi

How to post a form with curl, lesson #1:

Dig out all the <input> tags in the form that you want to fill in. (There's a perl program called formfind.pl on the curl site that helps with this).

If there's a "normal" post, you use -d to post. -d takes a full "post string", which is in the format

<variable1>=<data1>&<variable2>=<data2>&...

The 'variable' names are the names set with "name=" in the <input> tags, and the data is the contents you want to fill in for the inputs. The data must be properly URL encoded. That means you replace space with + and that you write weird letters with %XX where XX is the hexadecimal representation of the letter's ASCII code.

Example:

(page located at http://www.formpost.com/getthis/)

<form action="post.cgi" method="post">
<input name=user size=10>
<input name=pass type=password size=10>
<input name=id type=hidden value="blablabla">
<input name=ding value="submit">
</form>

We want to enter user 'foobar' with password '12345'.

To post to this, you enter a curl command line like:

curl -d "user=foobar&pass=12345&id=blablabla&ding=submit" \
    http://www.formpost.com/getthis/post.cgi

While -d uses the application/x-www-form-urlencoded mime-type, generally understood by CGI's and similar, curl also supports the more capable multipart/form-data type. This latter type supports things like file upload.

-F accepts parameters like -F "name=contents". If you want the contents to be read from a file, use <@filename> as contents. When specifying a file, you can also specify the file content type by appending ';type=<mime type>' to the file name. You can also post the contents of several files in one field. For example, the field name 'coolfiles' is used to send three files, with different content types using the following syntax:

curl -F "[email protected];type=image/gif,fil2.txt,fil3.html" http://www.post.com/postit.cgi

If the content-type is not specified, curl will try to guess from the file extension (it only knows a few), or use the previously specified type (from an earlier file if several files are specified in a list) or else it will use the default type 'text/plain'.

Emulate a fill-in form with -F. Let's say you fill in three fields in a form. One field is a file name which to post, one field is your name and one field is a file description. We want to post the file we have written named "cooltext.txt". To let curl do the posting of this data instead of your favourite browser, you have to read the HTML source of the form page and find the names of the input fields. In our example, the input field names are 'file', 'yourname' and 'filedescription'.

curl -F "file=@cooltext.txt" -F "yourname=Daniel" -F "filedescription=Cool text file with cool text inside" http://www.post.com/postit.cgi

To send two files in one post you can do it in two ways:

1. Send multiple files in a single "field" with a single field name:

curl -F "[email protected],cat.gif"

2. Send two fields with two field names:

curl -F "[email protected]" -F "[email protected]"

REFERRER

A HTTP request has the option to include information about which address referred to the actual page. Curl allows you to specify the referrer to be used on the command line. It is especially useful to fool or trick stupid servers or CGI scripts that rely on that information being available or containing certain data.

curl -e www.coolsite.com http://www.showme.com/

NOTE: The referer field is defined in the HTTP spec to be a full URL.

USER AGENT

An HTTP request can include information about the browser that generated the request. Curl allows it to be specified on the command line. It is especially useful to fool or trick stupid servers or CGI scripts that only accept certain browsers.

Example:

curl -A 'Mozilla/3.0 (Win95; I)' http://www.nationsbank.com/

Other common strings:

'Mozilla/3.0 (Win95; I)'                            Netscape Version 3 for Windows 95
'Mozilla/3.04 (Win95; U)'                           Netscape Version 3 for Windows 95
'Mozilla/2.02 (OS/2; U)'                            Netscape Version 2 for OS/2
'Mozilla/4.04 [en] (X11; U; AIX 4.2; Nav)'          NS for AIX
'Mozilla/4.05 [en] (X11; U; Linux 2.0.32 i586)'     NS for Linux

Note that Internet Explorer tries hard to be compatible in every way:

'Mozilla/4.0 (compatible; MSIE 4.01; Windows 95)'   MSIE for W95

Mozilla is not the only possible User-Agent name:

'Konqueror/1.0'                                     KDE File Manager desktop client
'Lynx/2.7.1 libwww-FM/2.14'                         Lynx command line browser

COOKIES

Cookies are generally used by web servers to keep state information at the client's side. The server sets cookies by sending a response line in the headers that looks like 'Set-Cookie: <data>' where the data part then typically contains a set of NAME=VALUE pairs (separated by semicolons ';' like "NAME1=VALUE1; NAME2=VALUE2;"). The server can also specify for what path the cookie should be used (by specifying "path=value"), when the cookie should expire ("expires=DATE"), for what domain to use it ("domain=NAME") and if it should be used on secure connections only ("secure").

If you've received a page from a server that contains a header like:

Set-Cookie: sessionid=boo123; path="/foo";

it means the server wants that first pair passed on when we get anything in a path beginning with "/foo".
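
As a rough sketch of what that header carries, the snippet below pulls the first NAME=VALUE pair and the path out of the example header with sed. The variable names are made up for illustration, and curl's own cookie parser handles many more cases than this.

```shell
# Header line copied from the example above.
header='Set-Cookie: sessionid=boo123; path="/foo";'

# Strip the header name, then keep everything up to the first semicolon.
pair=$(printf '%s\n' "$header" | sed -e 's/^Set-Cookie: *//' -e 's/;.*$//')

# Pull out the path attribute and drop the surrounding quotes.
path=$(printf '%s\n' "$header" | sed -n 's/.*path=\([^;]*\).*/\1/p' | tr -d '"')

echo "pair: $pair"
echo "path: $path"

# The pair could then be passed back by hand with -b, for example:
# curl -b "$pair" www.example.com/foo/
```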

Example, get a page that wants my name passed in a cookie:

curl -b "name=Daniel" www.sillypage.com

Curl also has the ability to use previously received cookies in following sessions. If you get cookies from a server and store them in a file in a manner similar to:

curl --dump-header headers www.example.com

... you can then, in a second connection to that (or another) site, use the cookies from the 'headers' file like:

curl -b headers www.example.com

While saving headers to a file is a working way to store cookies, it is error-prone and not the preferred way to do this. Instead, make curl save the incoming cookies using the well-known Netscape cookie format like this:

curl -c cookies.txt www.example.com

Note that specifying -b enables curl's "cookie awareness", and with -L you can make curl follow a Location: header (which is often used in combination with cookies). So if a site sends cookies and a location, you can use a non-existing file to trigger the cookie awareness like:

curl -L -b empty.txt www.example.com

The file to read cookies from must be formatted using plain HTTP headers OR as Netscape's cookie file. Curl will determine what kind it is based on the file contents. In the above command, curl will parse the header and store the cookies received from www.example.com/. Curl will send to the server the stored cookies which match the request as it follows the location. The file "empty.txt" may be a nonexistent file.

To both read and write cookies from a Netscape cookie file, you can set both -b and -c to use the same file:

curl -b cookies.txt -c cookies.txt www.example.com
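
For orientation, a Netscape-format cookie file holds one cookie per line in seven tab-separated fields (domain, subdomain flag, path, secure flag, expiry time, name, value). The sketch below builds a one-line jar by hand and lists its cookies as NAME=VALUE pairs. The jar contents are invented for illustration, and the awk filter simply skips every line beginning with '#', so it is a simplification of what curl itself does.

```shell
# Create a throwaway jar with one hypothetical cookie in it.
jar=$(mktemp)
printf 'www.example.com\tFALSE\t/foo\tFALSE\t0\tsessionid\tboo123\n' > "$jar"

# Print NAME=VALUE for every seven-field line that is not a comment.
cookies=$(awk -F '\t' '!/^#/ && NF == 7 { print $6 "=" $7 }' "$jar")

echo "$cookies"
rm -f "$jar"
```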

PROGRESS METER

The progress meter exists to show a user that something actually is happening. The different fields in the output have the following meaning:

  % Total    % Received % Xferd  Average Speed          Time             Curr.
                                 Dload  Upload Total    Current  Left    Speed
  0  151M    0 38608    0     0   9406      0  4:41:43  0:00:04  4:41:39  9287

From left-to-right:

%                     - percentage completed of the whole transfer
Total                 - total size of the whole expected transfer
%                     - percentage completed of the download
Received              - currently downloaded amount of bytes
%                     - percentage completed of the upload
Xferd                 - currently uploaded amount of bytes
Average Speed Dload   - the average transfer speed of the download
Average Speed Upload  - the average transfer speed of the upload
Time Total            - expected time to complete the operation
Time Current          - time passed since the invoke
Time Left             - expected time left to completion
Curr.Speed            - the average transfer speed the last 5 seconds (the first 5 seconds of a transfer is based on less time of course.)

The -# option will display a totally different progress bar that doesn't need much explanation!

SPEED LIMIT

Curl allows the user to set the transfer speed conditions that must be met to let the transfer keep going. By using the switches -y and -Y you can make curl abort transfers if the transfer speed is below the specified lowest limit for a specified time.

To have curl abort the download if the speed is slower than 3000 bytes per second for 1 minute, run:

curl -Y 3000 -y 60 www.far-away-site.com

This can very well be used in combination with the overall time limit, so that the above operation must be completed in whole within 30 minutes:

curl -m 1800 -Y 3000 -y 60 www.far-away-site.com

Forcing curl not to transfer data faster than a given rate is also possible, which might be useful if you're using a limited bandwidth connection and you don't want your transfer to use all of it (sometimes referred to as "bandwidth throttle").

Make curl transfer data no faster than 10 kilobytes per second:

curl --limit-rate 10K www.far-away-site.com

or

curl --limit-rate 10240 www.far-away-site.com

Or prevent curl from uploading data faster than 1 megabyte per second:

curl -T upload --limit-rate 1M ftp://uploadshereplease.com

When using the --limit-rate option, the transfer rate is regulated on a per-second basis, which will cause the total transfer speed to become lower than the given number, sometimes substantially lower if your transfer stalls for periods of time.
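
The K and M suffixes are multiples of 1024, which is why 10K and 10240 are interchangeable above. A small sketch of the arithmetic follows, using a made-up file size, to show the shortest time a rate-limited transfer could take. Real transfers will take longer, for the reason just described.

```shell
# 10K as understood by --limit-rate: 10 * 1024 bytes per second.
limit=$((10 * 1024))

# Hypothetical file size of 5 megabytes, for illustration only.
size=$((5 * 1024 * 1024))

# Lower bound on the transfer time in seconds, rounded up.
min_seconds=$(( (size + limit - 1) / limit ))

echo "limit: $limit bytes/s"
echo "minimum time: $min_seconds s"
```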

CONFIG FILE

Curl automatically tries to read the .curlrc file (or _curlrc file on win32 systems) from the user's home dir on startup.

The config file could be made up with normal command line switches, but you can also specify the long options without the dashes to make it more readable. You can separate the options and the parameter with spaces, or with = or :. Comments can be used within the file. If the first letter on a line is a '#'-letter the rest of the line is treated as a comment.

If you want the parameter to contain spaces, you must enclose the entire parameter within double quotes ("). Within those quotes, you specify a quote as \".

NOTE: You must specify options and their arguments on the same line.

Example, set default time out and proxy in a config file:

# We want a 30 minute timeout:
-m 1800
# ... and we use a proxy for all accesses:
proxy = proxy.our.domain.com:8080

White spaces ARE significant at the end of lines, but all white spaces leading up to the first characters of each line are ignored.

Prevent curl from reading the default file by using -q as the first command line parameter, like:

curl -q www.thatsite.com

Force curl to get and display a local help page in case it is invoked without URL by making a config file similar to:

# default url to get
url = "http://help.with.curl.com/curlhelp.html"

You can specify another config file to be read by using the -K/--config flag. If you set config file name to "-" it'll read the config from stdin, which can be handy if you want to hide options from being visible in process tables etc:

echo "user = user:passwd" | curl -K - http://that.secret.site.com
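
A sketch of generating that config text from shell variables, so that the password is never passed as an argument to curl. The variable names and values are placeholders, and the curl invocation is left commented out since it needs a real server.

```shell
# Placeholder credentials, for illustration only.
user='user'
passwd='passwd'

# Build the config text. Double quotes protect any spaces in the value.
config=$(printf '# generated config\nuser = "%s:%s"\n' "$user" "$passwd")

echo "$config"

# Feed it to curl on stdin instead of the command line:
# printf '%s\n' "$config" | curl -K - http://that.secret.site.com
```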

EXTRA HEADERS

When using curl in your own very special programs, you may end up needing to pass on your own custom headers when getting a web page. You can do this by using the -H flag.

Example, send the header "X-you-and-me: yes" to the server when getting a page:

curl -H "X-you-and-me: yes" www.love.com

This can also be useful in case you want curl to send a different text in a header than it normally does. The -H header you specify then replaces the header curl would normally send. If you replace an internal header with an empty one, you prevent that header from being sent. To prevent the Host: header from being used:

curl -H "Host:" www.server.com

FTP and PATH NAMES

Do note that when getting files with the ftp:// URL, the given path is relative to the directory you enter. To get the file 'README' from your home directory at your ftp site, do:

curl ftp://user:passwd@my.site.com/README

But if you want the README file from the root directory of that very same site, you need to specify the absolute file name:

curl ftp://user:passwd@my.site.com//README

(I.e. with an extra slash in front of the file name.)
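
The rule can be sketched as a tiny helper: join the host and the path with a single slash, and an absolute server path (one that already starts with '/') ends up with the double slash automatically. The function name ftp_url is invented for this example.

```shell
# Hypothetical helper: build an ftp:// URL from a host part and a path.
ftp_url() {
    printf 'ftp://%s/%s\n' "$1" "$2"
}

# Relative to the login directory.
rel=$(ftp_url 'user:passwd@my.site.com' 'README')

# Absolute path on the server: the leading slash becomes the second slash.
abs=$(ftp_url 'user:passwd@my.site.com' '/README')

echo "$rel"
echo "$abs"
```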

FTP and firewalls

The FTP protocol requires one of the involved parties to open a second connection as soon as data is about to get transferred. There are two ways to do this.

The default way for curl is to issue the PASV command which causes the server to open another port and await another connection performed by the client. This is good if the client is behind a firewall that doesn't allow incoming connections.

curl ftp://ftp.download.com/

If the server, for example, is behind a firewall that doesn't allow connections on ports other than 21 (or if it just doesn't support the PASV command), the other way to do it is to use the PORT command and instruct the server to connect to the client on the IP number and port given as parameters to the PORT command.

The -P flag to curl supports a few different options. Your machine may have several IP-addresses and/or network interfaces and curl allows you to select which of them to use. Default address can also be used:

curl -P - ftp://ftp.download.com/

Download with PORT but use the IP address of our 'le0' interface (this does not work on Windows):

curl -P le0 ftp://ftp.download.com/

Download with PORT but use 192.168.0.10 as our IP address:

curl -P 192.168.0.10 ftp://ftp.download.com/

NETWORK INTERFACE

Get a web page from a server using a specified network interface or local IP address:

curl --interface eth0:1 http://www.netscape.com/

or

curl --interface 192.168.1.10 http://www.netscape.com/

HTTPS

Secure HTTP requires SSL libraries to be installed and used when curl is built. If that is done, curl is capable of retrieving and posting documents using the HTTPS protocol.

Example:

curl https://www.secure-site.com

Curl is also capable of using your personal certificates to get/post files from sites that require valid certificates. The only drawback is that the certificate needs to be in PEM-format. PEM is a standard and open format to store certificates with, but it is not used by the most commonly used browsers (Netscape and MSIE both use the so called PKCS#12 format). If you want curl to use the certificates you use with your (favourite) browser, you may need to download/compile a converter that can convert your browser's formatted certificates to PEM formatted ones. This kind of converter is included in recent versions of OpenSSL, and for older versions Dr Stephen N. Henson has written a patch for SSLeay that adds this functionality. You can get his patch (that requires an SSLeay installation) from his site at: http://www.drh-consultancy.demon.co.uk/

Example on how to automatically retrieve a document using a certificate with a personal password:

curl -E /path/to/cert.pem:password https://secure.site.com/

If you neglect to specify the password on the command line, you will be prompted for the correct password before any data can be received.

Many older SSL servers have problems with SSLv3 or TLS, which newer versions of OpenSSL and similar libraries use, so it is sometimes useful to specify which SSL version curl should use. Use -3, -2 or -1 to specify the exact SSL version to use (for SSLv3, SSLv2 or TLSv1 respectively):